Search News

Industry Portal

Popular Tags

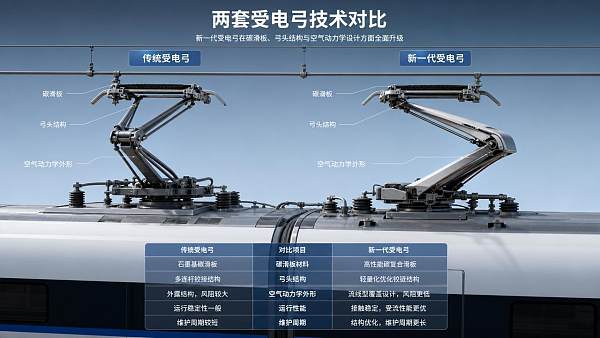

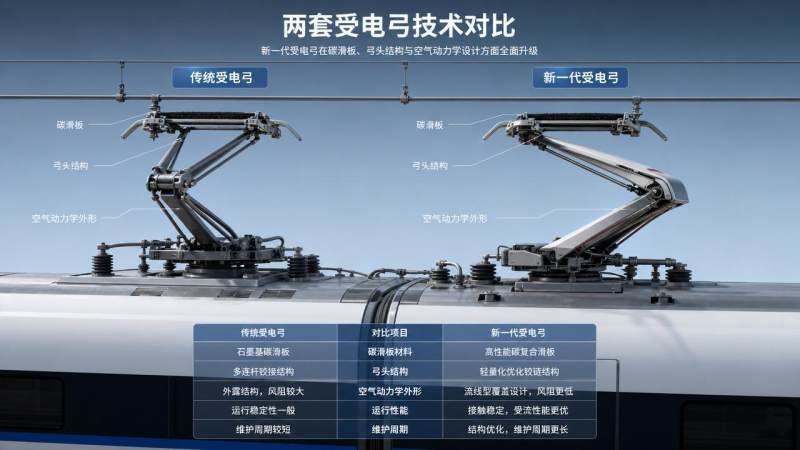

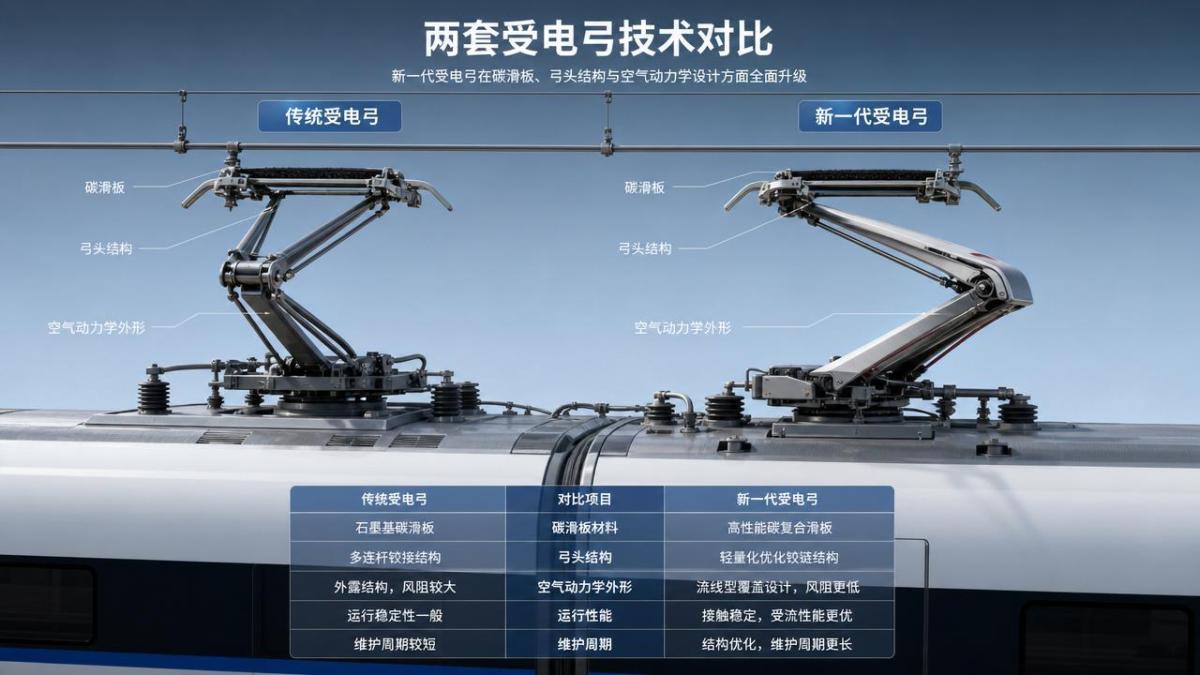

How to Compare Pantographs for High-Speed Rail Upgrades

Upgrading high-speed rail power collection demands more than a basic parts check. To compare pantographs effectively, technical evaluators must balance aerodynamic stability, contact reliability, wear behavior, carbon strip performance, and lifecycle cost under real operating speeds above 350 km/h. This guide outlines the key criteria, test indicators, and decision logic needed to select pantographs that support safer, more efficient, and future-ready rail upgrades.

What Pantographs Are and Why They Matter in High-Speed Upgrades

Pantographs are the roof-mounted current collection systems that transfer electrical power from the overhead contact line to the train. In conventional rail, a basic comparison may focus on geometry, static force, and maintenance intervals. In high-speed rail upgrades, however, pantographs become a strategic subsystem because their behavior directly affects traction continuity, overhead line wear, electromagnetic compatibility, operational safety, and network availability.

For technical evaluators, the challenge is not simply choosing the lightest or most advanced-looking option. The right comparison must consider how pantographs perform as part of a complete traction power ecosystem that includes catenary design, trainset aerodynamics, braking profile, signaling reliability, and maintenance capability. This is especially relevant for organizations such as GTOT that assess railway control components at the system level rather than one component at a time.

At operating speeds above 350 km/h, even small differences in uplift force stability, contact strip material, head suspension response, and aerodynamic noise can produce large effects across the lifecycle of the train and overhead line. That is why comparing pantographs should be treated as a structured technical evaluation instead of a catalog exercise.

Why the Industry Pays Close Attention to Pantographs

The rail industry focuses on pantographs because the power collection interface is one of the few places where mechanical motion, electrical transfer, aerodynamic disturbance, and environmental exposure meet continuously during operation. Unlike many onboard components, pantographs interact every second with infrastructure owned and maintained by the wider rail system. A weak match between train and catenary can increase arcing, accelerate wire wear, reduce energy efficiency, and trigger service disruption.

Today’s high-speed upgrades also face tighter expectations around digitalization, asset value, decarbonization, and safety. Operators want higher average speeds without increasing maintenance windows. Infrastructure managers want predictable wire life. Train manufacturers want lower aerodynamic drag and stable current collection under crosswind conditions. Evaluators therefore compare pantographs not only for technical compliance but also for system resilience and cost of ownership.

This trend is reinforced by more complex global procurement environments. In competitive tenders, a pantograph proposal must often show evidence of testing to the relevant EN and IEC standards, fleet references, sensor integration readiness, and compatibility with advanced diagnostics. For EPC contractors, rolling stock integrators, and technical advisors, the comparison process must be rigorous enough to support long-term credibility.

Core Evaluation Logic for Comparing Pantographs

A high-quality comparison starts with one principle: evaluate pantographs under the real operating envelope, not under isolated laboratory assumptions. A strong candidate on paper may underperform when exposed to high crosswinds, tunnel pressure waves, winter icing, mixed catenary quality, or frequent transitions between power supply sections.

Technical evaluators should typically compare pantographs across six linked dimensions: contact quality, aerodynamic behavior, mechanical durability, strip and wire wear, maintainability, and total lifecycle economics. These dimensions are interdependent. For example, increasing contact force may improve current continuity but also raise wear and noise. Similarly, reducing mass may improve dynamic response while complicating structural fatigue performance. The best decision usually comes from balancing trade-offs rather than maximizing a single indicator.
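One way to make this balancing explicit is a weighted scoring matrix with a minimum acceptable score per dimension. The sketch below is illustrative only: the dimension keys mirror the six dimensions named above, but the weights, the floor value, and the 0-10 scores are hypothetical placeholders, not figures from any standard or supplier.

```python
# Illustrative only: weights, floor, and 0-10 scores are hypothetical placeholders.
DIMENSIONS = (
    "contact_quality",
    "aerodynamic_behavior",
    "mechanical_durability",
    "strip_and_wire_wear",
    "maintainability",
    "lifecycle_economics",
)


def weighted_score(scores, weights):
    """Return the weighted average of the scores across all six dimensions."""
    missing = [d for d in DIMENSIONS if d not in scores or d not in weights]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    total_weight = sum(weights[d] for d in DIMENSIONS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(scores[d] * weights[d] for d in DIMENSIONS) / total_weight


def passes_floor(scores, floor):
    """True if no single dimension falls below the minimum acceptable score."""
    return all(scores[d] >= floor for d in DIMENSIONS)


# Hypothetical project weighting (assumption: contact quality matters most).
weights = dict(zip(DIMENSIONS, (0.25, 0.20, 0.15, 0.15, 0.10, 0.15)))

# Candidate A: strong contact and aerodynamics, weak strip/wire wear pairing.
candidate_a = dict(zip(DIMENSIONS, (9, 8, 7, 3, 6, 7)))
# Candidate B: no standout strength, but balanced across all dimensions.
candidate_b = dict(zip(DIMENSIONS, (7, 7, 7, 7, 7, 7)))

for name, scores in (("A", candidate_a), ("B", candidate_b)):
    total = weighted_score(scores, weights)
    print(name, round(total, 2), passes_floor(scores, floor=5))
```

In this made-up example both candidates land at roughly the same weighted total, yet candidate A fails the floor on strip and wire wear. The floor check is what keeps a single strong indicator from masking a weak one.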

Key Technical Criteria That Separate One Pantograph from Another

Contact reliability under real speed

The first comparison point is whether the pantograph can maintain stable contact at target speed and under representative track, line, and climate conditions. Evaluators should examine mean contact force, standard deviation, percentage of contact loss, arc frequency, and current collection quality during acceleration, cruise, and transition zones. Good pantographs do not simply maintain contact; they do so with controlled force fluctuation that reduces both strip wear and catenary damage.
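As a rough illustration, the indicators above can be summarized from a sampled contact force record. This is a minimal sketch under stated assumptions: the input is a regularly sampled force series in newtons, contact loss is approximated as samples at or below a chosen threshold, and the mean-minus-three-sigma figure is included as a commonly used statistical minimum. Formal assessments under the applicable standards prescribe their own measurement, filtering, and arcing criteria, which this sketch does not reproduce. The function name and the sample values are hypothetical.

```python
from statistics import mean, pstdev


def contact_force_summary(forces_n, sample_period_s, loss_threshold_n=0.0):
    """Summarize a regularly sampled contact force record (values in newtons).

    loss_threshold_n is an assumption: samples at or below it count as contact loss.
    """
    if not forces_n:
        raise ValueError("force record is empty")
    f_mean = mean(forces_n)
    f_std = pstdev(forces_n)  # population standard deviation of the record
    lost = sum(1 for f in forces_n if f <= loss_threshold_n)
    return {
        "mean_n": f_mean,
        "std_n": f_std,
        "statistical_min_n": f_mean - 3 * f_std,
        "contact_loss_pct": 100.0 * lost / len(forces_n),
        "contact_loss_time_s": lost * sample_period_s,
    }


# Hypothetical short record sampled every 10 ms, for illustration only.
record = [100.0, 120.0, 80.0, 0.0]
summary = contact_force_summary(record, sample_period_s=0.01)
for key, value in summary.items():
    print(f"{key}: {value:.3f}")
```

Computing the same summary separately for acceleration, cruise, and transition zones makes it easier to see where force fluctuation concentrates, rather than averaging it away over a whole run.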

Aerodynamics and uplift behavior

In high-speed service, the pantograph is strongly affected by airflow around the train roof. Head geometry, frame shape, fairing integration, and mounting position all influence uplift and vibration. Comparative testing should include open-air and tunnel scenarios, crosswind simulation, and interaction with adjacent roof equipment. A pantograph that looks efficient in static design may create unstable force peaks when exposed to train wake effects or passing trains on neighboring tracks.

Carbon strip and wear pairing

Carbon strip design deserves special attention because it affects conductivity, thermal behavior, friction, arc resistance, and maintenance interval. Technical evaluators should compare strip hardness, impregnation strategy, current capacity, wear rate, and compatibility with the actual contact wire material used on the target network. The goal is not simply long strip life. The better question is whether the strip-wire pair delivers acceptable wear on both sides of the interface over time.
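The strip-wire question can be made concrete with a simple two-sided cost estimate. The sketch below assumes wear on each side is expressed in millimetres over the same reference distance, and that a renewal cost can be attributed to each side; the attribution of contact wire renewal cost to a single pantograph is a modelling assumption, and every number shown is a hypothetical placeholder.

```python
def consumed_fraction(wear_mm, usable_mm):
    """Fraction of the usable wear allowance consumed over the reference distance."""
    if usable_mm <= 0:
        raise ValueError("usable thickness must be positive")
    return wear_mm / usable_mm


def pair_cost(strip_wear_mm, strip_usable_mm, strip_cost,
              wire_wear_mm, wire_usable_mm, wire_cost):
    """Combined strip and wire cost over the reference distance.

    wire_cost is the share of wire renewal cost attributed to this pantograph
    (an assumption of this sketch, not an established allocation method).
    """
    strip_part = consumed_fraction(strip_wear_mm, strip_usable_mm) * strip_cost
    wire_part = consumed_fraction(wire_wear_mm, wire_usable_mm) * wire_cost
    return strip_part + wire_part


# Hypothetical values per reference distance, in arbitrary cost units.
# Hard, long-life strip that is harsher on the wire.
long_life = pair_cost(1.0, 10.0, 400.0, 0.02, 4.0, 20000.0)
# Softer strip that wears faster but is gentler on the wire.
gentle = pair_cost(2.0, 10.0, 400.0, 0.01, 4.0, 20000.0)

print("long-life strip pair cost:", round(long_life, 2))
print("gentle strip pair cost:", round(gentle, 2))
```

With these placeholder inputs the strip that wears twice as fast still yields the lower combined cost, because the wire side dominates. Real inputs may reverse the result, which is the reason to compute both sides rather than compare strip life alone.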

Mechanical architecture and maintainability

Different pantographs may use varying spring systems, pneumatic or electric actuators, head suspension designs, and frame materials. These choices influence response speed, failure modes, inspection access, and spare parts strategy. In an upgrade project, maintainability can be as important as pure dynamic performance because fleet workshops must support repeatable servicing without excessive downtime or specialized tooling.

Monitoring and digital readiness

Modern pantographs increasingly support condition monitoring through force sensors, strip wear measurement, shock detection, camera inspection support, and onboard data logging. For organizations pursuing digitalized asset management, these features matter. A technically strong pantograph that cannot support predictive maintenance may become less attractive than one that slightly underperforms in a single benchmark but provides better long-term data transparency.

Typical Pantograph Comparison Scenarios in Rail Upgrades

Not every upgrade project compares pantographs for the same reason. The evaluation framework should reflect the business and technical context. Some projects focus on speed increase, while others target reliability improvement, fleet renewal, cross-border compatibility, or reduced infrastructure wear.

How to Read Test Data Without Making the Wrong Decision

A common mistake in comparing pantographs is to rely on isolated peak values or supplier-preferred test conditions. Technical evaluators should ask whether the results were generated on representative catenary types, realistic train roof layouts, and credible environmental envelopes. Data should be interpreted in context: a low average wear rate in benign conditions may be less useful than moderate wear under demanding crosswind and tunnel conditions that better match real service.

It is also important to compare not only nominal performance but performance consistency. Pantographs with narrow tolerance bands, stable repeatability, and clear degradation signatures are often easier to manage over decades of fleet operation. In upgrade decisions, predictable behavior can be more valuable than an impressive best-case result.
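Consistency can be compared with basic spread statistics over repeated test runs. The sketch below is a minimal illustration: it assumes each candidate has one result per run for the same indicator (here, a contact force standard deviation in newtons), and the run values are hypothetical.

```python
from statistics import mean, stdev


def repeatability(values):
    """Return mean, range, and coefficient of variation for repeated run results."""
    if len(values) < 2:
        raise ValueError("need at least two runs to assess repeatability")
    m = mean(values)
    if m == 0:
        raise ValueError("coefficient of variation is undefined for a zero mean")
    return {
        "mean": m,
        "range": max(values) - min(values),
        "cv_pct": 100.0 * stdev(values) / m,  # sample standard deviation over mean
    }


# Hypothetical per-run contact force standard deviations (N) for two candidates.
steady = [20.0, 21.0, 19.0, 20.5, 19.5]
erratic = [15.0, 25.0, 12.0, 28.0, 18.0]

for name, runs in (("steady", steady), ("erratic", erratic)):
    r = repeatability(runs)
    print(name, round(r["mean"], 2), round(r["range"], 2), round(r["cv_pct"], 1))
```

In this made-up data the erratic candidate has the better average and the best single run, but a much wider spread, which is the pattern the paragraph above warns against rewarding.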

When available, evaluators should combine bench testing, simulation, type-test documentation, route-specific dynamic testing, and maintenance history from existing fleets. This evidence-based approach reduces the risk of overvaluing design claims that have not been proven in service.

Practical Recommendations for Technical Evaluators

First, define the operating envelope before comparing pantographs. Include maximum speed, catenary type, climate, tunnel ratio, crosswind exposure, current demand, and maintenance strategy. Without this baseline, even detailed technical data can mislead.

Second, score pantographs as part of a system. A component that performs well in isolation may not fit the actual trainset roof profile, infrastructure tolerance, or depot capability.

Third, examine wear as a shared interface issue. The cheapest strip is rarely the cheapest solution if it accelerates overhead line degradation.

Fourth, include digital monitoring capability in the comparison matrix because future rail upgrades increasingly depend on measurable asset health.

Finally, validate supplier claims with service references, failure data, and maintainability evidence rather than brochure language alone.
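The first recommendation, defining the operating envelope, can be captured as a small data structure and used to check whether a supplier's test conditions actually cover the target service. This is a sketch under stated assumptions: the field names, units, and example values are hypothetical, and a real project would define its own parameters and acceptance rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingEnvelope:
    """Hypothetical envelope fields; names and units are assumptions of this sketch."""
    max_speed_kmh: float
    catenary_type: str
    min_temp_c: float
    max_temp_c: float
    tunnel_ratio: float        # fraction of route length in tunnel, 0.0 to 1.0
    max_crosswind_ms: float
    max_current_a: float
    maintenance_strategy: str  # recorded for context, not checked below


def uncovered_conditions(required, tested):
    """List envelope parameters that the tested conditions do not cover."""
    gaps = []
    if tested.max_speed_kmh < required.max_speed_kmh:
        gaps.append("max_speed_kmh")
    if tested.catenary_type != required.catenary_type:
        gaps.append("catenary_type")
    if tested.min_temp_c > required.min_temp_c:
        gaps.append("min_temp_c")
    if tested.max_temp_c < required.max_temp_c:
        gaps.append("max_temp_c")
    if tested.tunnel_ratio < required.tunnel_ratio:
        gaps.append("tunnel_ratio")
    if tested.max_crosswind_ms < required.max_crosswind_ms:
        gaps.append("max_crosswind_ms")
    if tested.max_current_a < required.max_current_a:
        gaps.append("max_current_a")
    return gaps


# Hypothetical target route and supplier test campaign.
route = OperatingEnvelope(350.0, "type-X", -25.0, 40.0, 0.30, 25.0, 1000.0,
                          "condition-based")
supplier_test = OperatingEnvelope(360.0, "type-X", -10.0, 40.0, 0.05, 15.0, 1000.0,
                                  "time-based")

print(uncovered_conditions(route, supplier_test))
```

Any parameter returned by the check marks a condition where the supplier's evidence does not yet reach the intended service, so the corresponding test data should be weighted with caution or supplemented.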

For organizations working in strategic intelligence, railway component evaluation, or international transport infrastructure, these practices strengthen both technical decision quality and bid credibility. They also align with the broader industry move toward safer, more efficient, and more transparent mobility systems.

Final Perspective

Comparing pantographs for high-speed rail upgrades is ultimately about understanding the quality of the train-to-wire relationship under demanding real-world conditions. The best pantographs are not defined by a single metric. They are defined by balanced current collection, aerodynamic control, compatible wear behavior, maintainable design, and readiness for digital asset management.

If your evaluation team wants stronger technical confidence, build your review around operating context, system interaction, and lifecycle evidence. That approach helps decision-makers choose pantographs that do more than pass a specification. It helps them secure durable performance across the full network that modern high-speed rail has to serve.
